Airflow kubernetes operator
9/13/2023

The Airflow Operator repo has been donated to the Apache foundation. The Airflow Operator supports sharing of the AirflowBase across multiple AirflowClusters; refer to the Design and Development Guide.

Recently I spent quite some time diving into Airflow and Kubernetes. While there are reports of people using them together, I could not find any comprehensive guide or tutorial. Also, there are many forks and abandoned scripts and repositories. Here I write down what I've found, in the hope that it is helpful to others. Because things move quickly, I've decided to put this on GitHub rather than in a blog post, so it can be easily updated. Please do not hesitate to provide updates, suggestions, fixes, etc.

There are some related, but different scenarios: using Airflow to schedule jobs on Kubernetes, running Airflow itself on Kubernetes, or both. You can actually replace Airflow with X, and you will see this pattern all the time. For example, you can use Jenkins or GitLab (build servers) on a VM, but use them to deploy on Kubernetes. Or you can host them on Kubernetes, but deploy somewhere else, like on a VM. And of course you can run them in Kubernetes and deploy to Kubernetes as well. The reason that I make this distinction is that you typically need to perform some different steps for each scenario.

Using Airflow to schedule jobs on Kubernetes

The simplest way to achieve this right now is by using the kubectl command-line utility (in a BashOperator) or the Python SDK. However, you can also deploy your Celery workers on Kubernetes. The Helm chart mentioned below does this.

Work is in progress that should lead to native support by Airflow for scheduling jobs on Kubernetes. The wiki contains a discussion about what this will look like, though the pages haven't been updated in a while. Progress can be tracked in Jira (AIRFLOW-1314). Development is being done in a fork of Airflow at bloomberg/airflow. This is still a work in progress, so it should probably not be deployed in production.

KubernetesPodOperator (coming in 1.10)

A subset of functionality will be released earlier, according to AIRFLOW-1517. In the next release of Airflow (1.10), a new Operator will be introduced that leads to a better, native integration of Airflow with Kubernetes. The cool thing about this Operator will be that you can define custom Docker images per task. Previously, if your task required some Python library or other dependency, you needed to install it on the workers, so your workers ended up hosting the combination of all dependencies of all your DAGs. I think eventually this can replace the CeleryExecutor for many installations. Documentation for the new Operator can be found here.

Running Airflow on Kubernetes

Airflow is implemented in a modular way. The main components are the scheduler, the webserver, and workers. If you want to distribute workers, you may want to use the CeleryExecutor. In that case, you'll probably want Flower (a UI for Celery), and you need a queue, like RabbitMQ or Redis.

Docker images

The first thing you need is a Docker image that packages Airflow. Most people seem to use puckel/docker-airflow. This is a robust Docker image that is up to date and also has an automated build set up, pushing images to Docker Hub. This means that you do not need to build your own image when you are first starting out.
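The notes on running Airflow on Kubernetes and on Docker images can be made more concrete with a minimal sketch. It emits one Kubernetes Deployment per Airflow component (webserver, scheduler, Celery workers, Flower), all built from the same image. Several details are assumptions, not taken from the guide:

- the image tag;
- the `redis`/`postgres` service hostnames and credentials;
- the replica counts;
- that the image's entrypoint takes the component name as its first argument, as puckel/docker-airflow's entrypoint does.

Configuration is passed through Airflow's `AIRFLOW__<SECTION>__<KEY>` environment-variable overrides.

```python
"""Sketch: one Kubernetes Deployment per Airflow component, all from one image.

Assumed, not from the guide: the image tag, the service hostnames and
credentials below, the replica counts, and that the image's entrypoint takes
the component name as its first argument (as puckel/docker-airflow's does).
"""
import json
from typing import Dict, List

IMAGE = "puckel/docker-airflow:1.10.9"  # assumed tag; pin whatever you have tested

# Airflow reads AIRFLOW__<SECTION>__<KEY> environment variables as config overrides.
# The "redis"/"postgres" hostnames and the credentials are hypothetical.
AIRFLOW_ENV: Dict[str, str] = {
    "AIRFLOW__CORE__EXECUTOR": "CeleryExecutor",
    "AIRFLOW__CORE__SQL_ALCHEMY_CONN": "postgresql+psycopg2://airflow:airflow@postgres/airflow",
    "AIRFLOW__CELERY__BROKER_URL": "redis://redis:6379/1",
    "AIRFLOW__CELERY__RESULT_BACKEND": "db+postgresql://airflow:airflow@postgres/airflow",
}

# Component name -> replicas. Airflow 1.10 supports a single scheduler;
# the Celery workers are the part that scales out.
COMPONENTS: Dict[str, int] = {"webserver": 1, "scheduler": 1, "worker": 3, "flower": 1}


def deployment(component: str, replicas: int, image: str = IMAGE) -> Dict:
    """Build an apps/v1 Deployment manifest for one Airflow component."""
    labels = {"app": "airflow", "component": component}
    container = {
        "name": component,
        "image": image,
        # Passed to the image's entrypoint, assumed to run `airflow <component>`.
        "args": [component],
        "env": [{"name": k, "value": v} for k, v in AIRFLOW_ENV.items()],
    }
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": f"airflow-{component}", "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},  # must match the pod template labels
            "template": {"metadata": {"labels": labels}, "spec": {"containers": [container]}},
        },
    }


def all_deployments() -> List[Dict]:
    """One Deployment per entry in COMPONENTS, in declaration order."""
    return [deployment(name, replicas) for name, replicas in COMPONENTS.items()]


if __name__ == "__main__":
    # kubectl accepts JSON manifests, e.g. `python airflow_manifests.py | kubectl apply -f -`
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": all_deployments()}, indent=2))
```

A real deployment also needs several pieces this sketch omits:

- Services for the webserver, Flower, Redis and Postgres;
- a way to get DAG files into every pod;
- Secrets instead of inline credentials.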

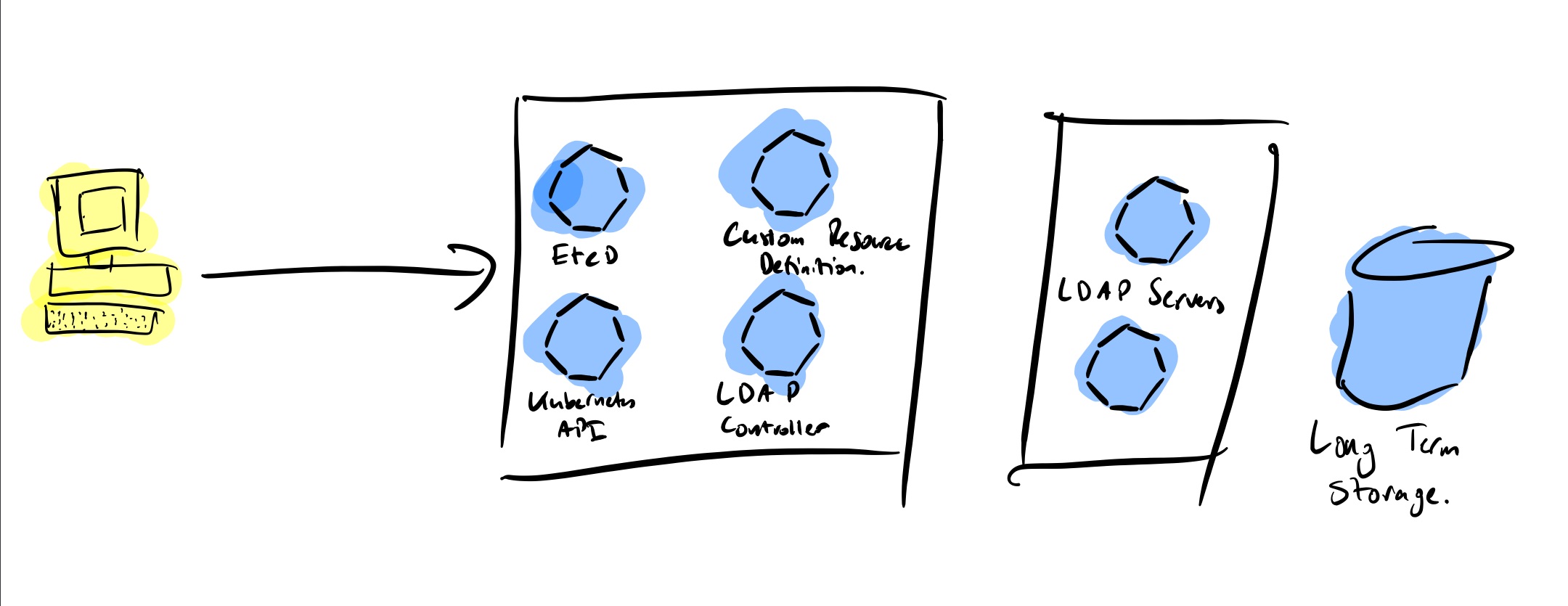
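The simplest option mentioned above for scheduling jobs on Kubernetes is calling kubectl from a BashOperator. That mostly comes down to building the right shell command. A minimal sketch, assuming kubectl is installed on the Airflow worker and already configured for the target cluster; the helper name `kubectl_run_command` and the example pod and image names are hypothetical. Because each call takes its own image, this also keeps a task's dependencies in its image rather than on the workers, the benefit described for the KubernetesPodOperator.

```python
import re
import shlex
from typing import Sequence

# Pod names must be valid DNS names; this checks the stricter DNS-1123 label form
# (lowercase alphanumerics and '-', starting and ending alphanumeric, max 63 chars).
_DNS1123_LABEL = re.compile(r"[a-z0-9]([-a-z0-9]*[a-z0-9])?")


def kubectl_run_command(
    pod_name: str,
    image: str,
    args: Sequence[str],
    namespace: str = "default",
) -> str:
    """Return a shell command that runs `args` in a one-off Pod using `image`.

    --restart=Never creates a bare Pod, --attach waits for it and streams its
    output, and --rm deletes the Pod afterwards. With --restart=Never, kubectl
    returns the container's exit code, so a failing container fails the task.
    """
    if len(pod_name) > 63 or not _DNS1123_LABEL.fullmatch(pod_name):
        raise ValueError(f"invalid pod name: {pod_name!r}")
    parts = [
        "kubectl", "run", pod_name,
        f"--image={image}",
        f"--namespace={namespace}",
        "--restart=Never",
        "--attach",
        "--rm",
        "--",  # arguments after this become the container's args
        *args,
    ]
    # shlex.join quotes each part so arguments with spaces or quotes survive the shell.
    return shlex.join(parts)


if __name__ == "__main__":
    # Hypothetical task running in its own image.
    print(kubectl_run_command("extract-orders", "python:3.11-slim",
                              ["python", "-c", "print('extracting')"]))
```

In a DAG, the returned string would be passed as `bash_command` to a `BashOperator`. `bash_command` is rendered with Jinja, so a literal `{{` in the arguments would need escaping.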

Airflow Operator Overview

Airflow Operator is a custom Kubernetes operator that makes it easy to deploy and manage Apache Airflow on Kubernetes. Apache Airflow is a platform to programmatically author, schedule and monitor workflows. Using the Airflow Operator, an Airflow cluster is split into two parts, represented by the AirflowBase and AirflowCluster custom resources. The Airflow Operator performs these jobs:

- Creates and manages the necessary Kubernetes resources for an Airflow deployment.
- Updates the corresponding Kubernetes resources when the AirflowBase or AirflowCluster specification changes.
- Restores managed Kubernetes resources that are deleted.
- Supports creation of Airflow schedulers with different Executors.

The Airflow Operator is still under active development and has not been extensively tested in a production environment. Backward compatibility of the APIs is not guaranteed for alpha releases. It uses Airflow 1.9 (1.10.1+ for the Kubernetes executor) and Redis 4.0.x (for the Celery executor).

Get started quickly with the Airflow Operator using the Quick Start Guide, or with the one-click deployment from Google Cloud Marketplace to your GKE cluster. For more information, check the Design and detailed User Guide. Join Airflow Slack and the dedicated #sig-kubernetes channel.
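The operator's jobs of creating resources, updating them when the spec changes, and restoring deleted ones follow the usual Kubernetes controller pattern. A controller compares the state a custom resource asks for with what actually exists and acts on the difference. Below is a toy, in-memory model of that pattern. It is not the Airflow Operator's code, and the simplified cluster spec fields (`executor`, `workers`) and resource names are hypothetical. A real controller typically reacts to watch events and calls the Kubernetes API instead of editing a dict.

```python
"""Toy, in-memory model of the reconcile pattern (not the Airflow Operator's code).

A controller compares the resources a custom resource asks for ("desired")
with what exists ("actual") and acts on the difference. The cluster spec
fields and resource names here are hypothetical.
"""
from typing import Dict, List, Tuple

Spec = Dict[str, object]


def desired_resources(cluster: Dict[str, object]) -> Dict[str, Spec]:
    """Map a simplified, hypothetical cluster spec to the resources it needs."""
    resources: Dict[str, Spec] = {
        "scheduler": {"executor": cluster["executor"]},
        "ui": {"replicas": 1},
    }
    if cluster["executor"] == "Celery":
        # Celery needs a broker and workers; other executors would not.
        resources["redis"] = {"replicas": 1}
        resources["worker"] = {"replicas": cluster["workers"]}
    return resources


def reconcile(desired: Dict[str, Spec], actual: Dict[str, Spec]) -> List[Tuple[str, str]]:
    """Bring `actual` in line with `desired` (mutating it) and return the actions taken."""
    actions: List[Tuple[str, str]] = []
    for name, spec in desired.items():
        if name not in actual:
            # Covers both first-time creation and restoring a deleted resource.
            actual[name] = dict(spec)
            actions.append(("create", name))
        elif actual[name] != spec:
            # The spec changed since the last pass: update the resource.
            actual[name] = dict(spec)
            actions.append(("update", name))
    return actions


if __name__ == "__main__":
    cluster: Dict[str, object] = {"executor": "Celery", "workers": 2}
    actual: Dict[str, Spec] = {}
    print(reconcile(desired_resources(cluster), actual))  # creates all four resources
    del actual["worker"]                                  # simulate an accidental delete
    print(reconcile(desired_resources(cluster), actual))  # [('create', 'worker')]
    cluster["workers"] = 3                                # user edits the spec
    print(reconcile(desired_resources(cluster), actual))  # [('update', 'worker')]
    print(reconcile(desired_resources(cluster), actual))  # [] (steady state)
```

Running the same reconcile step repeatedly is safe. Once the actual state matches the desired state it does nothing, which is why controllers can simply re-run it whenever something changes.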
